OPC UA Information Modeling Best Practices: What the OPC Foundation Gets Right — and What It Still Misses

The OPC Foundation’s UA Modelling Best Practices whitepaper is a valuable reference for OPC UA information modelers. It offers guidance on modeling discipline, consistency, and long-term compatibility. But there is a clear limit: compliant modeling rules are not the same thing as industrial architecture.

Introduction

The OPC Foundation recently published the UA Modelling Best Practices whitepaper (OPC 11030), a 2026 guidance document intended to help information modelers create OPC UA-based information models that stay consistent and safe to evolve over time. The document is explicitly presented as a whitepaper, not as a formal specification, but its recommendations are clearly meant to influence how Companion Specifications and vendor models are designed. It matters because it addresses real modeling topics: naming conventions, backward compatibility, method design, data granularity, Companion Specifications, deprecation, conformance units, and the proper use of OPC UA modeling concepts.

That said, the document has a clear boundary. It explains how to avoid obvious modeling mistakes. It does not explain how to build a robust industrial middleware architecture.

That distinction matters.

A model can be compliant and still be weak in production. A model can be elegant on paper and still fail when deployed at scale across heterogeneous industrial environments.

Naming conventions are necessary — but they are not architecture

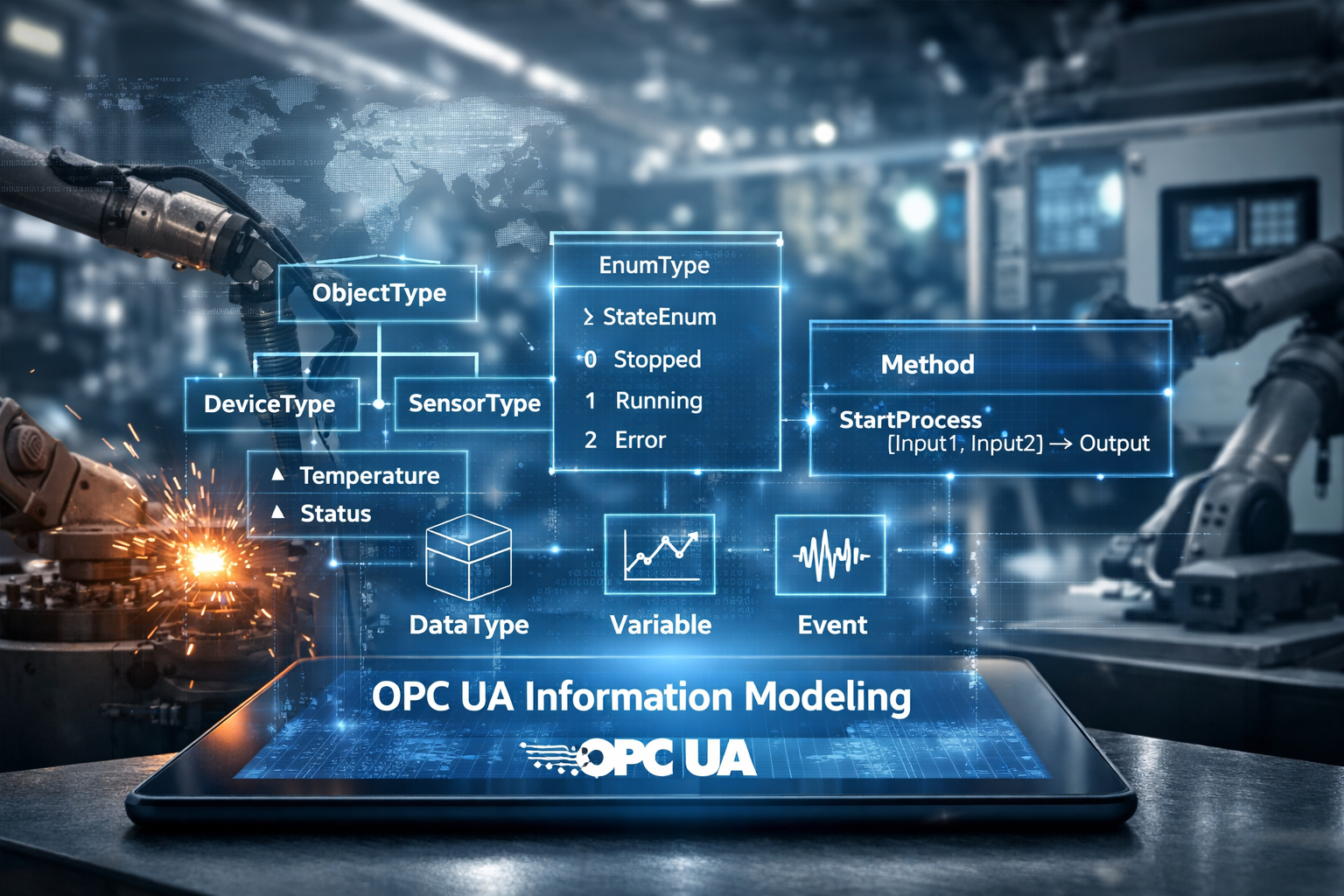

The whitepaper strongly recommends consistent naming conventions for OPC UA Nodes, structure fields, enums, UANodeSets, NamespaceUris, and Method arguments. BrowseNames should use PascalCase. ObjectTypes and VariableTypes should typically end with Type. Structured DataTypes should use the suffix DataType. ReferenceTypes should express relationships clearly, typically as verbs such as HasComponent or Organizes.

These rules are useful. They improve readability, reduce ambiguity, and support tooling.
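Rules of this kind are easy to automate. The following is a minimal sketch of a naming check, not a tool from the whitepaper: the function name `check_browse_name` and the node class labels (`"ObjectType"`, `"VariableType"`, `"StructureDataType"`) are assumptions chosen for illustration, and the suffix rules are treated as findings to review rather than hard errors, since the whitepaper says types should "typically" follow them.

```python
import re

# PascalCase here means: starts with an uppercase letter, then letters and digits only.
PASCAL_CASE = re.compile(r"^[A-Z][A-Za-z0-9]*$")


def check_browse_name(name: str, node_class: str) -> list[str]:
    """Return naming findings for one node (an empty list means nothing was flagged).

    node_class is a hypothetical label, not an OPC UA NodeClass enumeration value.
    """
    issues = []
    if not PASCAL_CASE.match(name):
        issues.append(f"{name}: BrowseName is not PascalCase")
    if node_class in ("ObjectType", "VariableType") and not name.endswith("Type"):
        issues.append(f"{name}: {node_class} should end with 'Type'")
    if node_class == "StructureDataType" and not name.endswith("DataType"):
        issues.append(f"{name}: structured DataType should end with 'DataType'")
    return issues


if __name__ == "__main__":
    for browse_name, cls in [("PumpType", "ObjectType"), ("pump_type", "ObjectType")]:
        print(browse_name, check_browse_name(browse_name, cls))
```

A check like this confirms hygiene only. It says nothing about whether `PumpType` is a sensible type to define in the first place.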

But they should not be overvalued.

A well-named model is not automatically a good model. Naming conventions improve hygiene. They do not solve semantic weakness, poor decomposition, or architectural incoherence. Many industrial models look clean at the BrowseName level while remaining badly structured in practice.

The whitepaper is right to insist on naming discipline. It would be wrong to confuse that discipline with modeling quality.

Backward compatibility is essential — and also a source of long-term complexity

The strongest part of the document is its treatment of backward compatibility. The whitepaper is explicit: an information model is a contract between OPC UA applications, and changes must be controlled if the same NamespaceUri is reused. It states that new mandatory components shall not be added to existing ObjectTypes or VariableTypes, Enumeration values shall not be added or removed, Structure fields shall not be changed, Method signatures shall not change, Variable DataTypes shall not change, and previously defined Nodes shall not be removed.

The logic is sound. Without these rules, interoperability collapses.
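To make the contract idea concrete, here is a hedged sketch of a compatibility check between two versions of a model published under the same NamespaceUri. The dictionary layout (`types`, `enums`, `methods`) and the function `find_breaking_changes` are hypothetical simplifications invented for this example; a real check would work on UANodeSet files and cover more rules than the four shown.

```python
def find_breaking_changes(old: dict, new: dict) -> list[str]:
    """Compare two simplified model descriptions and list breaking changes.

    Assumed layout (hypothetical):
      "types":   {type name: {component name: "Mandatory" | "Optional"}}
      "enums":   {enum name: [value names]}
      "methods": {method name: (input types, output types)}
    """
    problems = []

    # Previously defined nodes shall not be removed.
    for kind in ("types", "enums", "methods"):
        for name in old.get(kind, {}):
            if name not in new.get(kind, {}):
                problems.append(f"{kind}/{name}: removed")

    for type_name, old_components in old.get("types", {}).items():
        new_components = new.get("types", {}).get(type_name)
        if new_components is None:
            continue  # already reported as removed above
        for comp in old_components:
            if comp not in new_components:
                problems.append(f"types/{type_name}: component {comp} removed")
        # New components are allowed only if they are optional.
        for comp, rule in new_components.items():
            if comp not in old_components and rule == "Mandatory":
                problems.append(f"types/{type_name}: new mandatory component {comp}")

    # Any difference in an enumeration's value list is flagged.
    for name, old_values in old.get("enums", {}).items():
        new_values = new.get("enums", {}).get(name)
        if new_values is not None and new_values != old_values:
            problems.append(f"enums/{name}: values changed")

    # Method signatures shall not change.
    for name, old_signature in old.get("methods", {}).items():
        new_signature = new.get("methods", {}).get(name)
        if new_signature is not None and new_signature != old_signature:
            problems.append(f"methods/{name}: signature changed")

    return problems
```

Adding an optional component passes this check, while adding a mandatory one or extending an enumeration is reported.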

The problem is elsewhere: strict backward compatibility often pushes model authors toward endless accumulation instead of controlled redesign. Optional additions, subtypes, replacement types, and new namespaces are all valid mitigation strategies, and the whitepaper recommends them.

But the industrial consequence is predictable. Most of these strategies add to a model rather than simplify it, so over time models tend to become heavier, less readable, and less coherent. The document prevents breaking changes. It does not prevent structural drift.

That is not a minor point. In industrial systems, complexity is not an academic inconvenience. It directly affects maintainability, onboarding, implementation cost, and long-term reliability.

Inheritance is useful, but real industrial objects are multi-aspect by nature

The whitepaper recommends a clear distinction between inheritance and composition. Subtyping should refine the main characteristic of an ObjectType. Additional aspects should be introduced through composition, using Interfaces or AddIns. It also warns against creating deep and overly complex type hierarchies.

This guidance is correct.
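The distinction can be illustrated outside OPC UA with a small analogy. In the sketch below, a single inheritance chain carries the main characteristic, a `Protocol` plays a role loosely comparable to an Interface, and a component object plays a role loosely comparable to an AddIn. All class names (`Device`, `Pump`, `Diagnosable`, `MaintenanceAddIn`) and the 5000-hour limit are invented for the example, and the mapping to OPC UA concepts is approximate.

```python
from dataclasses import dataclass, field
from typing import Protocol, runtime_checkable


@runtime_checkable
class Diagnosable(Protocol):
    """An aspect any type can offer, loosely comparable to an Interface."""

    def diagnostics(self) -> dict: ...


@dataclass
class MaintenanceAddIn:
    """An aspect attached by composition, loosely comparable to an AddIn."""

    hours_since_service: float = 0.0

    def is_service_due(self, limit_hours: float = 5000.0) -> bool:
        return self.hours_since_service >= limit_hours


@dataclass
class Device:
    """Base of the single inheritance chain."""

    name: str


@dataclass
class Pump(Device):
    """The subtype refines the main characteristic: this device is a pump."""

    speed_rpm: float = 0.0
    # The maintenance aspect is composed in, not inherited.
    maintenance: MaintenanceAddIn = field(default_factory=MaintenanceAddIn)

    def diagnostics(self) -> dict:
        return {"name": self.name, "speed_rpm": self.speed_rpm}


if __name__ == "__main__":
    pump = Pump("P101", speed_rpm=1450.0)
    print(isinstance(pump, Device), isinstance(pump, Diagnosable))
    print(pump.maintenance.is_service_due())
```

The pump stays in one hierarchy, and each further aspect can be added or reused on unrelated types without deepening that hierarchy.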

The limitation comes from OPC UA itself: there is no multiple inheritance for ObjectTypes. As a result, once industrial objects combine several functional dimensions — process role, device behavior, diagnostics, configuration, maintenance, quality, contextual relations — inheritance alone becomes insufficient.

The whitepaper acknowledges this indirectly when it explains that composition is required to model additional aspects that do not fit into a single hierarchy.

That is where industrial reality begins to diverge from specification simplicity.

In actual systems, composition is not a secondary design option. It is often the only realistic way to build scalable models. The challenge is then no longer “how to define a type” but “how to maintain semantic clarity while assembling many interoperable aspects into a runtime information space.”

That is already architecture, not just modeling.

Optional versus mandatory: a specification compromise, not an industrial answer

The whitepaper discusses the tension between consumer expectations and provider constraints. From the client side, mandatory functionality is desirable because it guarantees availability. From the server side, optional functionality is often easier to implement across diverse products. The document therefore recommends using optional elements where support cannot be guaranteed, while noting that optional features can later be made required through conformance units and profiles.

This is sensible from a standardization perspective.
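The mechanism can be sketched as follows, under simplifying assumptions. `TypeDefinition`, `Profile`, and `missing_components` are hypothetical names, and a profile is reduced here to a set of optional components that it promotes to required. Real conformance units and profiles carry more than a list of component names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TypeDefinition:
    mandatory: frozenset  # components every instance must expose
    optional: frozenset   # components an instance may expose


@dataclass(frozen=True)
class Profile:
    name: str
    required_optionals: frozenset  # optional components this profile makes required


def missing_components(
    instance: set, type_def: TypeDefinition, profile: Optional[Profile] = None
) -> set:
    """Return the components an instance lacks, with or without a profile."""
    required = set(type_def.mandatory)
    if profile is not None:
        # A profile can only promote components the type already declares as optional.
        required |= profile.required_optionals & type_def.optional
    return required - instance


if __name__ == "__main__":
    pump_type = TypeDefinition(frozenset({"Speed"}), frozenset({"Pressure", "Temperature"}))
    monitoring = Profile("Monitoring", frozenset({"Pressure"}))
    exposed = {"Speed", "Temperature"}
    print(missing_components(exposed, pump_type))              # valid against the type alone
    print(missing_components(exposed, pump_type, monitoring))  # lacks Pressure for the profile
```

The same instance is valid against the type and incomplete against the profile, which is exactly the gap a client has to handle.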

But optionality comes at a cost. When too much of a model becomes optional, clients can no longer rely on it operationally: each one must probe for every optional element and define what to do when it is absent. The model remains technically valid, yet functionally weak. In industrial deployments, predictable behavior matters more than theoretical flexibility.

This is where a purely specification-driven mindset becomes insufficient. A useful industrial model must not only be extensible. It must also be dependable.

Data granularity is where the whitepaper becomes genuinely practical

One of the most relevant sections of the document compares several ways to represent structured information in OPC UA: individual Variables, one Variable using a structured DataType, a hybrid model combining both, Methods, and Events. The whitepaper explains the trade-offs of each pattern with a concrete example around IP configuration.

This section is strong because it addresses a real implementation question rather than a purely formal one.

The document makes the strengths and weaknesses clear. Individual Variables are easy for generic clients but weak for transactional consistency. A single structured DataType preserves atomicity but is less transparent. Methods provide controlled access but eliminate subscription behavior. Events are contextual and transactional but not appropriate as a general state access mechanism. The hybrid approach combines transactional access for specific clients with browsable simple elements for generic clients.

That conclusion is solid.

In industrial systems, the hybrid model is often the most useful because it supports both semantic richness and practical accessibility. It is more demanding on the server side, but it is usually the only pattern that remains usable across diverse client profiles and operational needs.
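The hybrid pattern can be sketched with the IP configuration example. This is an illustration under stated assumptions, not the whitepaper's model: the field names (`address`, `subnet_mask`, `gateway`) and the class `HybridIpConfigNode` are invented, and a lock stands in for whatever consistency mechanism a real server would use.

```python
import threading
from dataclasses import dataclass


@dataclass(frozen=True)
class IpConfiguration:
    """Hypothetical structured value; a real model would define its own fields."""

    address: str
    subnet_mask: str
    gateway: str


class HybridIpConfigNode:
    """One structured value for atomic access, plus per-field reads for generic clients."""

    def __init__(self, initial: IpConfiguration) -> None:
        self._lock = threading.Lock()
        self._value = initial

    def read_structure(self) -> IpConfiguration:
        """Return the whole configuration as one consistent snapshot."""
        with self._lock:
            return self._value

    def write_structure(self, new_value: IpConfiguration) -> None:
        """Replace all fields in one step, so no reader sees a partial update."""
        with self._lock:
            self._value = new_value

    def read_field(self, field_name: str) -> str:
        """Expose a single field, as an individual Variable would."""
        with self._lock:
            return getattr(self._value, field_name)


if __name__ == "__main__":
    node = HybridIpConfigNode(IpConfiguration("192.168.0.10", "255.255.255.0", "192.168.0.1"))
    node.write_structure(IpConfiguration("10.0.0.5", "255.0.0.0", "10.0.0.1"))
    print(node.read_field("address"), node.read_structure())
```

Keeping a single source of truth behind both views is the extra server-side work the pattern demands: the individual fields are derived from the structure rather than stored separately.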

Properties, DataVariables, and Methods must be chosen for use, not for elegance

The whitepaper clearly distinguishes Properties from DataVariables. Properties are lightweight Variables that describe the Node they belong to and are intended as metadata. DataVariables are the right mechanism when the information is part of the actual modeled reality and may need richer structure or extensibility. The recommendation is pragmatic: use Properties when they clearly describe node characteristics and use DataVariables otherwise.

It also states that Method signatures shall not be changed, and that if application-specific statuses are needed, they should be returned through output arguments rather than by extending StatusCodes. The proposed pattern is to keep StatusCodes standard and return detailed application status in a dedicated output argument, typically an Int32.

These recommendations are technically sound.
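The status pattern might look like the sketch below. The method `start_pump`, its arguments, and the `StartResult` values are hypothetical. The two StatusCode constants use the standard OPC UA numeric values for Good and Bad_InvalidArgument; everything application-specific travels in a separate integer result, standing in for an Int32 output argument.

```python
from enum import IntEnum
from typing import Optional

# Standard OPC UA StatusCode values; the set is not extended by the application.
GOOD = 0x00000000
BAD_INVALID_ARGUMENT = 0x80AB0000


class StartResult(IntEnum):
    """Hypothetical application-specific results, returned as an Int32 output argument."""

    STARTED = 0
    ALREADY_RUNNING = 1
    INTERLOCK_ACTIVE = 2


def start_pump(
    target_speed_rpm: float, running: bool, interlock: bool
) -> tuple[int, Optional[int]]:
    """Return (StatusCode, application result).

    The StatusCode reports whether the call itself was acceptable.
    The application result explains what happened inside the application.
    """
    if target_speed_rpm <= 0:
        # The call is malformed: standard Bad status, no output argument.
        return BAD_INVALID_ARGUMENT, None
    if interlock:
        return GOOD, int(StartResult.INTERLOCK_ACTIVE)
    if running:
        return GOOD, int(StartResult.ALREADY_RUNNING)
    return GOOD, int(StartResult.STARTED)


if __name__ == "__main__":
    print(start_pump(1450.0, running=False, interlock=False))
    print(start_pump(1450.0, running=False, interlock=True))
```

Generic clients only need to understand the standard StatusCode, while clients that know the model can interpret the detailed result.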

But they also reveal the limit of a rule-based approach. In practice, model quality depends less on whether a designer can classify something as a Property or a DataVariable and more on whether the resulting information space remains intelligible, maintainable, and operationally useful.

Good modeling is not about elegance alone. It is about what clients, engineers, and systems can do reliably with the model.

Companion Specifications improve consistency — but they do not solve runtime architecture

The whitepaper also covers the process of creating Companion Specifications, the use of templates and validators, the handling of UANodeSets, and the importance of conformance units, profiles, and test cases. It strongly recommends testing early because the definition of test cases often reveals modeling flaws or inconsistencies.

That recommendation is entirely justified.

Testing is not just a certification exercise. It is one of the fastest ways to expose weak semantics, ambiguous behavior, missing rules, and poor assumptions in an information model.

However, even a well-tested Companion Specification does not automatically produce a good industrial runtime architecture. The whitepaper helps define interoperable models. It does not address how those models behave inside a scalable middleware, across dynamic configurations, multiple protocol adapters, distributed deployments, or large semantic address spaces.

That gap is not a flaw in the document’s intent, since runtime architecture is outside its stated purpose. But it is a hard limit on its practical reach.

What the whitepaper gets right — and what it still misses

The OPC Foundation whitepaper is valuable because it formalizes important rules that too many models ignore:

- naming discipline,

- backward compatibility,

- responsible use of ObjectTypes and DataTypes,

- modeling trade-offs,

- deprecation discipline,

- and the need for testing.

That is real progress.

But the whitepaper remains focused on model correctness at specification level. It does not address the industrial questions that decide whether a system succeeds:

- How does the model evolve operationally?

- How is it instantiated at scale?

- How are semantic layers maintained across heterogeneous protocols?

- How does the architecture remain understandable over time?

- How are runtime behavior, maintainability, and deployment constraints handled?

Those are not secondary issues. They are the decisive ones.

Conclusion

The UA Modelling Best Practices whitepaper should be read. It is a serious document, and many of its recommendations are correct and useful. It helps modelers avoid obvious mistakes and gives a stronger common base for future Companion Specifications and vendor information models.

But it should also be read with a clear understanding of its scope.

It explains how to model more safely. It does not explain how to build an industrial OPC UA architecture that remains coherent, scalable, and operationally effective over time.

That second step is where the real engineering begins.

At OpenOpcUa, this distinction matters. Information modeling is not just about producing compliant NodeSets. It is about structuring an industrial semantic runtime that can actually support implementation, evolution, and deployment in the real world.

Reference

OPC Foundation, OPC 11030 – OPC Unified Architecture: UA Modelling Best Practices, Release 1.03.03, 2026-03-23.